Let’s be honest. If you’re building an application in the health-tech space, you’re not just moving bits and bytes. You’re handling people’s lives. Your code—and the data that feeds it—is the thin line between a user achieving a health goal and a user having an allergic reaction. Between a patient trusting your app and a patient’s doctor telling them to delete it.

Every CTO, founder, and data scientist evaluating a food API says they care about data accuracy. It’s table stakes. Yet, the industry has a dirty secret: most providers are playing a dangerous shell game with their data sources. They present a unified, clean-looking endpoint, but behind the curtain, it’s a chaotic mix of scraped retail sites, unverified user submissions, and data that hasn’t been updated since the last presidential election.

The biggest danger in health-tech isn’t a 404 error; it’s a silent, subtle inaccuracy that poisons your entire product. It’s the data from crowdsourced repositories like Open Food Facts or the user-generated chaos of MyFitnessPal, where a single user can list a Snickers bar as having 10 grams of protein and zero sugar. Relying on this kind of data to power a professional application is like building a hospital on a foundation of sand.

This isn’t just a technical problem. It’s a trust problem. And in our business, trust is the only currency that matters.

This guide isn’t a sales pitch. It’s an evaluation framework. It’s the conversation we believe every development team should be having before they write a single line of code that depends on an external food API. It’s about asking the hard questions, so you don’t have to answer for the consequences later.

Why food nutrition data is harder to get right than it looks

On the surface, it seems simple. A product has a nutrition label. You read the label, put the data in a database, and expose it via an API. The end. If it were that easy, every food API would be perfect, and we wouldn’t be having this conversation.

The reality is that the global food supply chain is a sprawling, entropic system. Food nutrition data accuracy isn’t a static target; it’s a moving, complex challenge that requires a sophisticated, multi-layered approach to solve.

Consider the principle of Garbage In, Garbage Out (GIGO): flawed inputs produce flawed outputs. In machine learning, a flawed training dataset yields a flawed model. In health-tech, the stakes are higher. A flawed data pipeline can lead to incorrect dietary recommendations, missed allergen warnings, and a complete erosion of user trust.

Here are just a few of the complexities that make this problem so challenging:

- Regional Variations: The same product from a global brand like Nestlé or Kraft Heinz can have a different formulation, and therefore a different nutrition profile, in the United States than in the European Union. US rules (set by the FDA) and EU rules (Regulation (EU) No 1169/2011) differ in labeling requirements and rounding rules, and even in the basis for the numbers: US labels declare values per serving, while EU labels must declare them per 100 g or 100 ml. A simple UPC lookup isn’t enough; you need geographic and regulatory context.

- Constant Formulation Changes: Food manufacturers are constantly tweaking their recipes. They might switch from sugar to high-fructose corn syrup, replace one type of oil with another to cut costs, or add a new vitamin blend to make a health claim. Each change invalidates the existing nutrition data. Your API is only as good as its ability to detect and ingest these changes in near real-time.

- The Scale of the Problem: There are millions of unique food products (UPCs) on the market at any given time, with tens of thousands of new products introduced each year. Manually curating this data is impossible. Building a system to automate its collection, verification, and maintenance is a monumental engineering and data science challenge.

- Ambiguity and Inconsistency: Is “Light Cream” the same as “Half-and-Half”? How do you classify a product that’s both “Organic” and “Gluten-Free”? Organizing foods into a formal hierarchy of categories and relationships, known as an ontology, is a deeply complex field. Most APIs take shortcuts, which leads to poor search results and incorrect categorization.
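To make the regional point concrete, here is a minimal Python sketch. It assumes a hypothetical `LabelPanel` record that stores each market’s label separately, and it rescales declared amounts to a per-100 g basis so that a per-serving US panel and a per-100 g EU panel can be compared. The product code and all nutrient numbers are invented for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class LabelPanel:
    """One market's label for one product (hypothetical structure)."""
    code: str                    # product barcode, kept as a string to preserve leading zeros
    market: str                  # e.g. "US" or "EU"
    basis_g: float               # grams the declared amounts refer to (a serving, or 100)
    nutrients: dict[str, float]  # nutrient name -> declared amount on that basis

def per_100g(panel: LabelPanel) -> dict[str, float]:
    """Rescale declared amounts to a per-100 g basis so panels from different markets compare."""
    if panel.basis_g <= 0:
        raise ValueError("basis must be a positive mass in grams")
    factor = 100.0 / panel.basis_g
    return {name: round(amount * factor, 2) for name, amount in panel.nutrients.items()}

# Key by (code, market), not by code alone: the two markets may sell different formulations.
panels = {
    ("PRODUCT-123", "US"): LabelPanel("PRODUCT-123", "US", 39.0, {"sugars_g": 2.0, "protein_g": 5.0}),
    ("PRODUCT-123", "EU"): LabelPanel("PRODUCT-123", "EU", 100.0, {"sugars_g": 4.4, "protein_g": 12.0}),
}
```

With these made-up numbers, the US panel works out to about 5.13 g sugars and 12.82 g protein per 100 g, which can now sit beside the EU panel’s 4.4 g and 12.0 g.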

Treating food nutrition data as a simple key-value store is the first, and most critical, mistake a developer can make. It’s a living, breathing dataset that demands a rigorous, defense-in-depth approach to maintain its integrity.
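One way to treat the data as living, rather than as a single key-value pair, is to keep every label version together with the date it took effect and to answer lookups “as of” a date. The sketch below assumes you can learn an effective date for each version; `ProductHistory` and its methods are hypothetical names.

```python
from bisect import bisect_right
from dataclasses import dataclass, field
from datetime import date
from typing import Optional

@dataclass
class ProductHistory:
    """Every known label version for one (product code, market) pair, oldest first."""
    versions: list[tuple[date, dict[str, float]]] = field(default_factory=list)

    def add(self, effective: date, nutrients: dict[str, float]) -> None:
        """Record a label version and keep the list ordered by effective date."""
        self.versions.append((effective, nutrients))
        self.versions.sort(key=lambda v: v[0])

    def as_of(self, day: date) -> Optional[dict[str, float]]:
        """Return the label in effect on `day`, or None if no version existed yet."""
        dates = [effective for effective, _ in self.versions]
        i = bisect_right(dates, day)
        return self.versions[i - 1][1] if i else None

# Histories are keyed by (code, market); a reformulation adds a version instead of overwriting.
histories: dict[tuple[str, str], ProductHistory] = {}
```

Keeping old versions also lets you answer a common support question: what did the app show on the day this user logged the meal?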

The 4 sources of food data errors

Every incorrect data point in your application can be traced back to a source. Understanding these sources of failure is the first step in building a resilient system. In our analysis, errors almost always originate from one of four areas.

1. Manufacturer Error

It’s tempting to treat the information printed on the physical package as infallible ground truth. It isn’t. Manufacturers, despite their best efforts and QA processes, make mistakes. Data entry clerks have typos. Rounding rules are misapplied. A decimal point gets misplaced. While rare, these errors at the very source can be pernicious because they appear authoritative. A robust data verification system doesn’t blindly trust manufacturer data; it cross-references it against expected nutritional ranges for that food category and flags statistical outliers for human review.
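A minimal version of that outlier check might look like the following. It uses the modified z-score (built from the median and the median absolute deviation, with the commonly cited 3.5 cut-off), which holds up well against the very outliers it is looking for. The function name, the sample values, and the choice of statistic are illustrative assumptions, not a description of any provider’s pipeline.

```python
from statistics import median

def flag_outliers(values: dict[str, float], threshold: float = 3.5) -> list[str]:
    """Return the IDs whose value is a robust outlier within one food category.

    values: product ID -> nutrient amount on a common basis (e.g. grams of fat per 100 g).
    Uses the modified z-score 0.6745 * (x - median) / MAD.
    """
    xs = list(values.values())
    med = median(xs)
    mad = median(abs(x - med) for x in xs)
    if mad == 0:
        # More than half the values are identical; anything different is suspicious.
        return [k for k, x in values.items() if x != med]
    return [k for k, x in values.items() if abs(0.6745 * (x - med) / mad) > threshold]
```

Given per-100 g fat values for a handful of yogurts in which one entry reads 50.0 while the rest sit between 1.5 and 4.0, only that entry is returned for human review.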

2. Update Lag

This is the most common and insidious source of inaccuracy. A manufacturer reformulates a popular cereal to reduce its sugar content by 15%. They print new packaging, and the new version hits store shelves. But your food data API is still serving the old data. For a diabetic user carefully managing their sugar intake, your app is now providing dangerously incorrect information. The time it takes for an API to reflect a real-world product change—the data latency—is a critical performance indicator. For many APIs that rely on periodic, manual, or scraped updates, this lag can be months, or even years. In the world of health, that’s an eternity.
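If you audit a provider yourself (for example, by noting when a reformulated package first appears on shelves and when the API starts returning the new values), data latency reduces to simple date arithmetic. This hypothetical `latency_report` helper summarizes such observations as the average and maximum lag.

```python
from datetime import datetime, timedelta
from statistics import mean

def latency_report(changes: list[tuple[datetime, datetime]]) -> dict[str, timedelta]:
    """Summarize data latency from (label_changed_at, reflected_in_api_at) pairs."""
    if not changes:
        raise ValueError("need at least one observed change")
    lags = [served - changed for changed, served in changes]
    if any(lag < timedelta(0) for lag in lags):
        raise ValueError("a change cannot be served before it happened")
    avg_seconds = mean(lag.total_seconds() for lag in lags)
    return {"average": timedelta(seconds=avg_seconds), "maximum": max(lags)}
```

Tracking the maximum alongside the average matters: a single product that lags for months is exactly the case that hurts a diabetic user.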

3. OCR Scanning

To build their databases, many services turn to Optical Character Recognition (OCR) technology, often powered by users snapping photos of nutrition labels with their phones. While modern OCR is impressive, it’s far from perfect, especially under real-world conditions like poor lighting, wrinkled packaging, or strange fonts. A crinkle in a package can make a ‘3’ look like an ‘8’. A shadow can turn ‘6g’ of fat into ‘8g’. These aren’t just minor discrepancies; they are fundamental corruptions of the data that can have a cascading effect on your application’s calculations and recommendations.
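One inexpensive defense is an internal-consistency check. Declared energy should roughly match what the macronutrients imply under the general Atwater factors (4 kcal/g for protein and carbohydrate, 9 kcal/g for fat). The sketch below applies that check to an OCR’d fat value and, when the check fails, tries single-digit swaps the OCR step is plausibly prone to. The confusion table, the 10% tolerance, and the label numbers are assumptions. Real labels also involve fiber, sugar alcohols, and rounding, so a failed check should mean “send to review”, not “auto-correct”.

```python
# Digit pairs an OCR step might confuse; illustrative, not an exhaustive table.
CONFUSIONS = {"3": "8", "8": "36", "6": "8", "1": "7", "7": "1"}

def atwater_kcal(protein_g: float, carbs_g: float, fat_g: float) -> float:
    """Estimate energy with the general Atwater factors: 4, 4 and 9 kcal per gram."""
    return 4 * protein_g + 4 * carbs_g + 9 * fat_g

def digit_swaps(text: str) -> list[float]:
    """Values reachable by swapping one commonly confused digit in an OCR'd number."""
    swaps = []
    for i, ch in enumerate(text):
        for alt in CONFUSIONS.get(ch, ""):
            swaps.append(float(text[:i] + alt + text[i + 1:]))
    return swaps

def check_ocr_fat(kcal: float, protein_g: float, carbs_g: float,
                  fat_text: str, tol: float = 0.10) -> tuple[bool, list[float]]:
    """Return (consistent, candidates) for a fat value read by OCR.

    consistent: the Atwater estimate is within `tol` (relative) of the declared kcal.
    candidates: single-digit swaps that would make the label consistent, for a reviewer.
    """
    def fits(fat_g: float) -> bool:
        return abs(atwater_kcal(protein_g, carbs_g, fat_g) - kcal) <= tol * kcal

    if fits(float(fat_text)):
        return True, []
    return False, [v for v in digit_swaps(fat_text) if fits(v)]
```

With an invented label of 140 kcal, 3 g protein, and 20 g carbohydrate, a fat reading of “8” fails the check and the function offers 6 as the consistent reading, which is the shadow-induced error described above.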

4. User-Submitted Data

This is, without question, the single greatest threat to food nutrition data accuracy. APIs that build their databases on the back of user-submitted or crowdsourced content are choosing breadth at the expense of reliability. The incentives are all wrong. A user’s goal is to quickly log their meal, not to painstakingly ensure the data they’re entering is 100% correct for all future users. This leads to a database filled with:

- Incomplete Entries: Users log calories and macros but ignore micronutrients.

- Typographical Errors: 50g of protein instead of 5.0g.

- Personalized Names: “Mom’s Sunday Lasagna” instead of the actual product name.

- Outright Vandalism: Malicious or joke entries.

Building a mission-critical health application on a foundation of user-submitted data is professional malpractice. It outsources your core responsibility—data integrity—to an anonymous, unaccountable, and unvetted crowd.
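If you must accept user submissions at all, mechanical screening catches some of these failure modes before they reach other users. The hypothetical `screen` function below flags missing fields, macronutrient grams that exceed the serving’s own mass (which is how a “50g instead of 5.0g” typo usually gives itself away), negative amounts, and names that look personal. The required-field list and the name markers are assumptions, and vandalism and subtler errors still need human moderation.

```python
from dataclasses import dataclass, field

# Hypothetical policy choices; tune both to your product.
REQUIRED = ("energy_kcal", "protein_g", "carbs_g", "fat_g", "sodium_mg")
PERSONAL_MARKERS = ("mom's", "grandma", "homemade", "my ")

@dataclass
class Submission:
    name: str
    serving_g: float
    nutrients: dict[str, float] = field(default_factory=dict)

def screen(sub: Submission) -> list[str]:
    """Return reasons a user submission needs review; an empty list means it passed."""
    issues = []
    missing = [k for k in REQUIRED if k not in sub.nutrients]
    if missing:
        issues.append("missing: " + ", ".join(missing))
    macro_g = sum(sub.nutrients.get(k, 0.0) for k in ("protein_g", "carbs_g", "fat_g"))
    if macro_g > sub.serving_g:
        issues.append(f"macros ({macro_g:g} g) exceed the serving mass ({sub.serving_g:g} g)")
    if any(v < 0 for v in sub.nutrients.values()):
        issues.append("negative amount")
    # Normalize curly apostrophes so "Mom’s" and "Mom's" match the same marker.
    name = sub.name.lower().replace("\u2019", "'")
    if any(marker in name for marker in PERSONAL_MARKERS):
        issues.append("name looks personal rather than a product name")
    return issues
```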

How food APIs verify their data: scraped vs submitted vs lab-verified

Not all data is created equal. The method an API provider uses to acquire its data is the most telling indicator of its quality. This is its provenance. When you’re evaluating an API, you need to look past the marketing claims and ask one simple question: “Where does the data actually come from?” The answer will fall into one of three categories.

The Low Tier: Scraped Data

This is the bottom of the barrel. The provider writes scripts (spiders) that crawl the websites of grocery stores and retailers, pulling product information from public-facing web pages. This approach is fraught with problems:

- Brittleness: A simple website redesign can break the scraper, causing data flow to cease without warning.

- Inaccuracy: Retailer websites are marketing tools, not technical databases. They often contain typos, outdated information, or promotional copy instead of hard data.

- Legal Risk: Many websites explicitly forbid scraping in their terms of service. Building your business on data acquired in this manner is a significant legal and operational risk.

The Mid Tier: Submitted (Crowdsourced) Data

This is the model used by many popular consumer apps and the APIs derived from them. As discussed, they rely on their user base to populate the database. The sales pitch is “the world’s largest food database,” but it’s a mile wide and an inch deep. The sheer volume of data hides a chaotic lack of consistency, verification, and reliability. For a startup trying to prove its value, using this data is a false economy. You save money on API costs but pay for it tenfold in customer support tickets, user churn, and reputational damage when the data is inevitably wrong.

The Professional Tier: Direct & Verified Data

This is the only acceptable standard for a serious health-tech application. In this model, data is sourced directly from the most authoritative entities possible:

- Direct Manufacturer Feeds: The provider establishes official data partnerships with food manufacturers, who provide structured, accurate data for their products directly. This is the ground truth.

- Regulatory Databases: The provider integrates with government and regulatory body databases, like the USDA FoodData Central. This provides a baseline of verified, standardized data, especially for generic ingredients and commodities.

- Controlled Curation: For any remaining gaps, the provider employs a team of trained nutritionists and data specialists to research and enter data, following a strict, multi-step verification protocol.

This approach prioritizes quality over quantity. The database may not have every obscure item from a local farmer’s market, but for the mainstream packaged products that make up most of what your users consume, the data is accurate, traceable, and trustworthy.
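In code, this tiering amounts to a precedence rule with provenance attached. The sketch below assumes each candidate value arrives tagged with its source. `manufacturer_direct` mirrors the `verification_level` value in the example response later in this guide, while `regulatory` and `curated` are hypothetical labels. Anything outside the list, such as scraped or user-submitted data, is refused rather than silently used.

```python
# Lower rank = more authoritative; mirrors the tiers described above.
SOURCE_RANK = {"manufacturer_direct": 0, "regulatory": 1, "curated": 2}

def pick_value(candidates: list[tuple[str, float]]) -> tuple[float, str]:
    """Return (value, source) from the most authoritative acceptable source.

    candidates: (source, value) pairs. Sources missing from SOURCE_RANK are ignored,
    and if none remain a LookupError is raised instead of falling back to them.
    """
    acceptable = [(SOURCE_RANK[src], src, val) for src, val in candidates if src in SOURCE_RANK]
    if not acceptable:
        raise LookupError("no acceptable source for this value")
    _, source, value = min(acceptable, key=lambda c: c[0])
    return value, source
```

Returning the source alongside the value keeps provenance attached all the way to your UI and logs.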

What a data confidence score actually means

Talk is cheap. Any API provider can claim their data is accurate. The truly transparent ones prove it. They provide metadata that allows you, the developer, to understand the provenance and reliability of each and every data point. The most powerful form of this metadata is a confidence score.

A confidence score is a quantitative measure of the API provider’s certainty in the accuracy of a given piece of data. It’s an admission that not all data is perfect and a tool that empowers you to handle it intelligently.

Imagine you query an API for a UPC and get a result. Without a confidence score, you’re flying blind. Was this data sourced from the manufacturer yesterday, or was it scraped from a random blog five years ago? You have no idea.

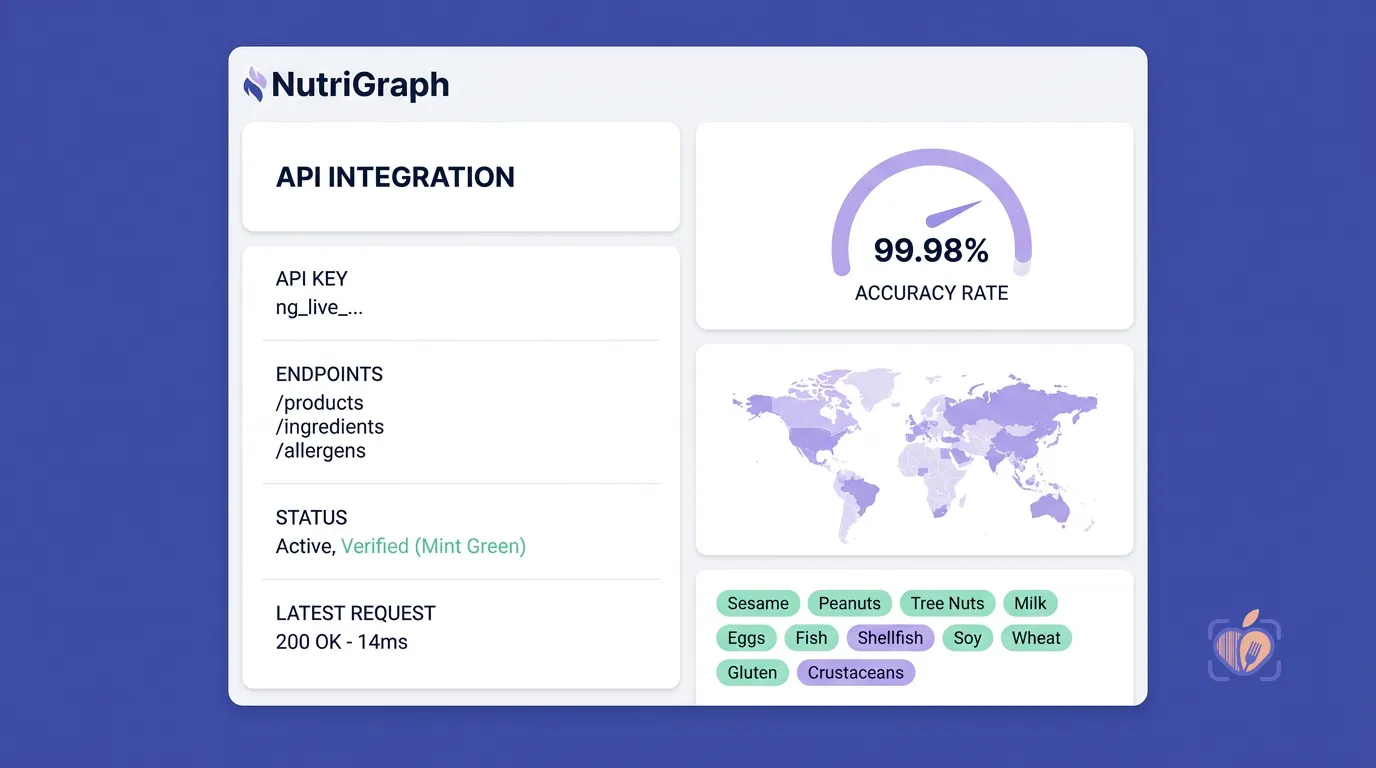

Now, imagine the same query to NutriGraphAPI. The JSON response includes a specific attribute:

```json
{
  "upc": "016000275287",
  "product_name": "Cheerios",
  "brand": "General Mills",
  "nutrients": [
    { "name": "Calories", "amount": 140, "unit": "kcal" },
    { "name": "Protein", "amount": 5, "unit": "g" }
  ],
  "verification_level": "manufacturer_direct",
  "last_updated": "2023-10-26T12:00:00Z",
  "confidence": 0.98
}
```
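Consuming a response like this is straightforward. The sketch below parses it into a typed record and validates the confidence range. Field names are taken from the example above; whether every response has exactly this shape is an assumption, and `NutritionRecord` and `parse_record` are hypothetical names. Note that the UPC stays a string so its leading zero survives.

```python
import json
from dataclasses import dataclass
from datetime import datetime

@dataclass(frozen=True)
class NutritionRecord:
    upc: str                                 # string, not int: keeps the leading zero
    product_name: str
    nutrients: dict[str, tuple[float, str]]  # name -> (amount, unit)
    verification_level: str
    last_updated: datetime
    confidence: float

def parse_record(raw: str) -> NutritionRecord:
    """Parse a response shaped like the example above into a NutritionRecord."""
    doc = json.loads(raw)
    nutrients = {n["name"]: (float(n["amount"]), n["unit"]) for n in doc["nutrients"]}
    # datetime.fromisoformat() accepts a trailing "Z" only from Python 3.11, so normalize it.
    updated = datetime.fromisoformat(doc["last_updated"].replace("Z", "+00:00"))
    confidence = float(doc["confidence"])
    if not 0.0 <= confidence <= 1.0:
        raise ValueError(f"confidence out of range: {confidence}")
    return NutritionRecord(doc["upc"], doc["product_name"], nutrients,
                           doc["verification_level"], updated, confidence)
```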

This changes everything. That "confidence": 0.98 attribute isn’t just a number; it’s a contract. It tells you a story about the data.

At NutriGraphAPI, this score means:

- 0.95 - 0.99: Data sourced directly from a manufacturer’s API or a primary regulatory database within the last 90 days. Highest level of trust.

- 0.85 - 0.94: Data verified by our internal AI models, cross-referencing multiple reliable sources (e.g., top-tier retailers + nutritional databases).

- 0.70 - 0.84: Data sourced from a single, trusted secondary source. Reliable, but flagged for secondary verification.

- < 0.70: Data is considered uncertain, either because it comes from an unverified source or because it is significantly aged. We generally don’t serve this data via our primary endpoints, but its presence in our system triggers a re-verification task.
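Those bands translate directly into a lookup. In this sketch the tier names are hypothetical, and treating each band’s lower edge as inclusive (so a value such as 0.945 falls into the second band) is an assumption, since the published ranges leave small gaps between them.

```python
def confidence_tier(score: float) -> str:
    """Map a confidence score to the published bands (lower edges treated as inclusive)."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if score >= 0.95:
        return "direct"          # manufacturer API or primary regulatory database
    if score >= 0.85:
        return "cross_verified"  # reconciled across multiple reliable sources
    if score >= 0.70:
        return "single_source"   # one trusted secondary source, pending re-verification
    return "uncertain"           # unverified or significantly aged
```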

This allows you to build smarter, more resilient applications. You can set a threshold in your code to only display nutrition information with a confidence score above 0.90. You can flag lower-confidence items for your users, perhaps with a message like, “Nutritional information is estimated.” You are no longer a passive consumer of data; you are an active participant in ensuring its quality.
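A display policy along those lines fits in a few lines. The 0.90 threshold and the “Nutritional information is estimated.” message come from the paragraph above; hiding values below 0.70 entirely, and the function name, are assumptions.

```python
from typing import Optional

DISPLAY_THRESHOLD = 0.90   # values above this are shown as-is (the example threshold from the text)
HIDE_BELOW = 0.70          # assumption: below this, show nothing rather than a guess
ESTIMATED_NOTE = "Nutritional information is estimated."

def render_calories(kcal: float, confidence: float) -> Optional[str]:
    """Return the text to display for a calorie value, or None to hide it."""
    if confidence > DISPLAY_THRESHOLD:
        return f"{kcal:g} kcal"
    if confidence >= HIDE_BELOW:
        return f"{kcal:g} kcal ({ESTIMATED_NOTE})"
    return None
```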

If an API provider cannot or will not provide this level of transparency, ask yourself: what are they hiding?

Questions to ask any food data API provider before signing up

Before you commit your product, your reputation, and your users’ well-being to a third-party API, you must conduct your due diligence. Treat it like a technical interview for a critical hire. Here is your evaluation checklist. Any provider worth their salt will have immediate, clear, and verifiable answers to these questions.

- Data Provenance: “For a common product such as Coca-Cola, can you walk me through the exact provenance of the nutrition data behind its UPC? Is it from a direct feed, a regulatory body, or a scraped source?”

- Data Latency: “What is your average and maximum data latency? When a manufacturer updates a product’s formula, what is your guaranteed SLA for that change to be reflected in your API’s production environment?”

- Source Composition: “What percentage of your database is populated by a) direct manufacturer/regulatory feeds, b) internal curation, c) OCR/automated scanning, and d) user-submitted content?”

- Error Handling & Verification: “How do you detect and correct errors, whether they originate from the source or your own ingestion pipeline? Do you have an automated anomaly detection system?”

- Allergen & Recall Data: “How do you track and ingest FDA recalls and EU food safety alerts (RASFF), including undeclared-allergen notices? Is this data linked directly to the affected UPCs, and how quickly is it available via the API?”

- Confidence & Transparency: “Do you provide a per-item confidence score or a similar data quality metric in your API response? Can I filter API calls based on this score?”

- Ontology and Classification: “How do you classify and categorize foods? Are you using a standardized ontology (like FoodOn), or is it a proprietary system? How do you handle ambiguous product types?”

Their answers—or lack thereof—will tell you everything you need to know about their commitment to food nutrition data accuracy.

How often is the data updated? Why freshness matters for allergen compliance

In many areas of software, data can be relatively static. For food nutrition, data is ephemeral. Its value decays over time. Data freshness isn’t a “nice-to-have”; it’s a core requirement for any application that deals with health, and especially with allergens.

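Consider the Food Allergen Labeling and Consumer Protection Act (FALCPA) in the US. It requires labels to clearly declare any of the major food allergens (eight under the original act; the FASTER Act of 2021 added sesame as the ninth). Manufacturers can, and do, change their production lines and ingredient suppliers. A product that was once peanut-free might suddenly be processed in a facility that also handles peanuts, and the manufacturer may respond by adding a “may contain peanuts” advisory. Such precautionary statements are voluntary under US law rather than mandated by FALCPA, so no regulatory requirement forces the change to be flagged anywhere; your data pipeline has to notice it.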

For a user with a severe allergy, this is a life-or-death distinction.
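Catching that kind of change means comparing allergen statements between label versions instead of overwriting them, and keeping “may contain” statements as their own field rather than discarding them. A minimal sketch follows; `AllergenInfo`, `new_risks`, and `affected_users` are hypothetical names.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AllergenInfo:
    contains: frozenset[str]     # allergens declared in the ingredients
    may_contain: frozenset[str]  # precautionary ("may contain") statements

def new_risks(old: AllergenInfo, new: AllergenInfo) -> set[str]:
    """Allergens the new label mentions (either way) that the old label did not mention at all."""
    before = old.contains | old.may_contain
    after = new.contains | new.may_contain
    return set(after - before)

def affected_users(profiles: dict[str, set[str]], risks: set[str]) -> list[str]:
    """IDs of users whose allergy profile intersects the newly introduced risks."""
    return sorted(user for user, allergies in profiles.items() if allergies & risks)
```

In the peanut scenario above, an old label that only declared milk and a new one that adds “may contain peanuts” yields `{"peanuts"}`, and only users with a peanut allergy are returned for notification.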

If your API’s data is stale, your application transforms from a helpful tool into a dangerous liability. A user with celiac disease trusts your app to identify gluten-free products. A parent trusts your app to help them find snacks that are safe for their child with a dairy allergy. If your data is six months out of date, you are breaking that trust in the most profound way possible.

This is why real-time ingestion of regulatory alerts, like the FDA’s recall database, is non-negotiable. A truly professional-grade food API doesn’t just provide nutritional data; it provides a safety and compliance layer. When a recall is issued for a specific batch of a product due to an undeclared allergen, that information should be programmatically available within hours, not weeks. The API should allow you to query not just by UPC, but to check against active recall and alert databases.
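Linking a recall to the affected products usually means extracting product codes from the notice and matching them against your catalog. The sketch below assumes notices arrive as free text that may mention 12-digit UPC-A codes, possibly with spaces or hyphens. Real recall feeds differ in format, EAN-13 codes would need a different check, and the function names are hypothetical. Validating the check digit filters out most lot numbers and other digit runs that merely look like UPCs.

```python
import re

def upc_a_is_valid(code: str) -> bool:
    """Verify the UPC-A check digit: 3x the odd-position digits plus the even-position digits."""
    if len(code) != 12 or not code.isdigit():
        return False
    digits = [int(c) for c in code]
    total = 3 * sum(digits[0:11:2]) + sum(digits[1:11:2])
    return (10 - total % 10) % 10 == digits[11]

def upcs_in_notice(text: str) -> set[str]:
    """Pull valid 12-digit UPCs out of free-text recall wording (spaces/hyphens allowed)."""
    candidates = re.findall(r"\b\d(?:[ -]?\d){11}\b", text)
    cleaned = {re.sub(r"[ -]", "", c) for c in candidates}
    return {c for c in cleaned if upc_a_is_valid(c)}

def flag_recalled(catalog: set[str], notices: list[str]) -> set[str]:
    """UPCs in your catalog that are mentioned by any of the given recall notices."""
    hits: set[str] = set()
    for notice in notices:
        hits |= upcs_in_notice(notice) & catalog
    return hits
```

For example, a notice mentioning “UPC 0 12345 67890 5” matches the catalog entry `012345678905`, while a 12-digit lot number with an invalid check digit is ignored.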

When you evaluate a provider, don’t just ask if they update their data. Ask how fast. Ask them to prove it.

NutriGraphAPI’s data verification stack: Manufacturer API + Regulatory Database + AI Verification

We didn’t build NutriGraphAPI to be another food database. We built it to be an authoritative, trustworthy source of truth for developers building the future of health.

Our entire architecture is designed around a single principle: defense in depth against inaccurate data. We don’t rely on any single source. Instead, we’ve built a multi-layered, self-healing data verification stack.

1. The Foundation: Manufacturer & Retailer Direct APIs

Our primary data ingestion pipeline connects directly to the source. We maintain formal data-sharing partnerships with hundreds of major CPG manufacturers and top-tier grocery retailers. We receive structured, real-time data feeds directly from their internal systems. This is our ground truth, the bedrock of our database. It dramatically reduces update lag and eliminates the errors associated with scraping and manual entry.

2. The Compliance Layer: Regulatory Database Integration

We continuously ingest and cross-reference our data with major government and regulatory databases, including the USDA FoodData Central and the EFSA Food Composition databases. This allows us to standardize data, validate manufacturer-provided information against a trusted baseline, and enrich our dataset with standardized units and micronutrient information that manufacturers might not provide.

3. The Intelligence Layer: AI-Powered Verification

This is our secret sauce. Every single data point that enters our system is analyzed by a suite of proprietary machine learning models. This AI layer acts as a tireless, 24/7 QA team:

- Anomaly Detection: Our models are trained on the entire database to understand what’s “normal.” If a new data point for a yogurt claims it has 50 grams of fat per serving, the system automatically flags it as a statistical outlier for human review.

- Cross-Source Reconciliation: When we have data for the same UPC from multiple sources (e.g., the manufacturer and a major retailer), our AI compares them, identifies discrepancies, and uses a weighted algorithm to determine the most likely correct value; agreement between independent sources raises the confidence score.

- Predictive Freshness: Our system analyzes update velocity across brands and categories to predict when a product’s data is likely to become stale, proactively scheduling it for re-verification even before a change is announced.
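The guide doesn’t spell out NutriGraphAPI’s weighting scheme, so the following is only one simple way to reconcile sources: a weighted mean plus an agreement flag that routes disagreements to review. The `Observation` type, the weights, and the 5% tolerance are assumptions for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Observation:
    source: str
    value: float
    weight: float   # hypothetical trust weight for the source; must be > 0

def reconcile(obs: list[Observation], max_rel_spread: float = 0.05) -> tuple[float, bool]:
    """Return (weighted mean, sources_agree) for one nutrient of one product.

    sources_agree is False when any observation deviates from the weighted mean by more
    than max_rel_spread (relative), which would send the value to human review.
    """
    if not obs or any(o.weight <= 0 for o in obs):
        raise ValueError("need at least one observation, all with positive weights")
    total_w = sum(o.weight for o in obs)
    estimate = sum(o.weight * o.value for o in obs) / total_w
    scale = max(abs(estimate), 1e-9)   # avoid dividing by zero for zero-valued nutrients
    agree = all(abs(o.value - estimate) / scale <= max_rel_spread for o in obs)
    return estimate, agree
```

A weighted mean is easy to reason about but can be pulled by a heavily weighted wrong source; a weighted median is a common alternative when that risk matters more.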

This three-tiered stack ensures that the data you receive from the NutriGraphAPI endpoint isn’t just data. It’s verified, cross-referenced, and context-aware intelligence.

Choosing a food data API is one of the most important architectural decisions you will make as a health-tech leader. You’re not just choosing a vendor; you’re choosing a partner in building user trust. Don’t settle for the convenient fiction of a massive, crowdsourced database. Demand transparency. Demand accountability. Demand proof.

Build your application on a foundation of verifiable truth.

Review NutriGraphAPI’s data verification methodology and explore our documentation at nutrigraphapi.com/docs.